Built on browser automation technology developed in-house by Keywalker, this service collects various document files on the Internet according to predefined rules.

A crawler visits the target site and collects page information, which the Web scraping service then extracts item by item.

For example, when collecting information from an e-commerce (EC) site, the items include the product category and name, model number, price, and stock quantity. When collecting sales information, they include the company name, address, telephone number, and type of business.

The information for each item is broken down, organized, and stored in a database.
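The extract-and-store step described above can be illustrated with a minimal sketch. It is not Keywalker's implementation: the HTML markup, the class names (`product`, `category`, `name`, `model`, `price`, `stock`), and the table layout are all hypothetical, chosen only to mirror the EC-site items listed earlier. It uses Python's standard `html.parser` and `sqlite3` modules.

```python
import sqlite3
from html.parser import HTMLParser

# Hypothetical item names, mirroring the EC-site example in the text.
FIELDS = ("category", "name", "model", "price", "stock")

# Hypothetical page fragment; real sites will use different markup.
SAMPLE_HTML = """
<div class="product">
  <span class="category">Cameras</span>
  <span class="name">Example Cam</span>
  <span class="model">EC-100</span>
  <span class="price">19,800</span>
  <span class="stock">12</span>
</div>
"""


class ProductParser(HTMLParser):
    """Collects the text of elements whose class is one of FIELDS."""

    def __init__(self):
        super().__init__()
        self.products = []    # finished records
        self._current = None  # record currently being filled
        self._field = None    # field whose text is expected next

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls == "product":
            self._current = {}
        elif cls in FIELDS and self._current is not None:
            self._field = cls

    def handle_data(self, data):
        if self._field is not None:
            self._current[self._field] = data.strip()
            self._field = None

    def handle_endtag(self, tag):
        # Assumes product blocks are flat <div> elements (no nested divs).
        if tag == "div" and self._current is not None:
            self.products.append(self._current)
            self._current = None


def extract(html):
    """Return a list of dicts, one per product block found in the HTML."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


def store(products, conn):
    """Normalize each item and insert it into a 'products' table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products "
        "(category TEXT, name TEXT, model TEXT, price INTEGER, stock INTEGER)"
    )
    rows = [
        (p["category"], p["name"], p["model"],
         int(p["price"].replace(",", "")), int(p["stock"]))
        for p in products
    ]
    conn.executemany("INSERT INTO products VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
```

Calling `store(extract(SAMPLE_HTML), sqlite3.connect(":memory:"))` would load the single sample product into an in-memory database. Converting the price and stock count to integers at insert time is one way of "organizing" the items so they can be queried numerically later.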

With 13 years of development experience, we have crawled more than 620,000 sites and more than 10 billion pages.

The service has been adopted as a Web marketing tool by companies of all sizes, from large to small.

Many companies and organizations continue to use it as new infrastructure for the big data era.

| Feature | Description |
|---|---|
| Cloud-ready | Because the service is cloud-based, it can be configured from anywhere at any time |
| Parallel crawling | Parallel crawling supports crawls on the scale of tens of millions to several hundred million pages |
| Crawls any site | Keywalker.js, our in-house browser automation language, can also extract information from dynamic sites |
| Flexible crawl settings | |
| Variety of document file formats | HTML, RSS, SITEMAP, PDF, Office, and so on |
| Tableau integration (optional) | The acquired data can be analyzed and visualized in conjunction with Tableau (a BI tool) |
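
As a rough illustration of the parallel crawling idea listed in the table, the sketch below fetches several pages concurrently with a thread pool from Python's standard library. It is a generic pattern, not Keywalker's system: the function names `fetch` and `crawl_parallel`, the `fetch_fn` parameter, and the worker count are assumptions made for this example, and a crawl at the scale quoted above would need far more (distribution across machines, rate limiting, retries).

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from urllib.request import urlopen


def fetch(url, timeout=10):
    """Download one page and return its raw bytes."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.read()


def crawl_parallel(urls, fetch_fn=fetch, max_workers=8):
    """Fetch all URLs concurrently.

    Returns two dicts: {url: body} for successes and {url: exception}
    for failures, so one bad page does not stop the whole crawl.
    """
    pages, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Map each future back to the URL it is fetching.
        futures = {pool.submit(fetch_fn, u): u for u in urls}
        for fut in as_completed(futures):
            url = futures[fut]
            try:
                pages[url] = fut.result()
            except Exception as exc:  # record the failure and keep going
                errors[url] = exc
    return pages, errors
```

Passing the download function in as `fetch_fn` keeps the concurrency logic separate from the network code, which makes it possible to swap in a browser-driven fetcher or a test stub.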

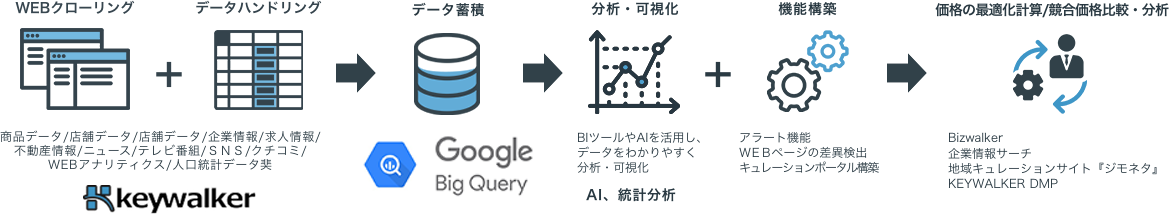

In addition to Web crawling, Keywalker offers a series of big data solutions covering collection, organization, analysis, visualization, additional functions, and operation.

Interview-based survey of the workflow in the field (free of charge)

Rough estimate for automating the workflow (free of charge)

Building the automation system based on the workflow definition

Installation (in the user's PC environment or in our cloud environment)

Get started